Rabia Akhtar

The ongoing conflict between Russia and Ukraine has put the spotlight on the ominous specter of nuclear weapons and their potentially horrifying use in the present era. Recent displays of nuclear signaling and threats from Russia have starkly revealed that the age-old taboo surrounding these weapons no longer serves as an impenetrable deterrent against their utilization.

Since the devastation of Hiroshima and Nagasaki in 1945, the non-use of nuclear weapons has bred complacency among global leaders and the general public. However, this complacency belies the ongoing dangers of these deadly weapons. The current situation in Russia and Ukraine serves as a stark reminder of the precariousness of nuclear deterrence. As the conflict unfolds, it becomes increasingly clear that the decision to employ nuclear weapons is not solely a military one; it carries significant political implications. Crossing the threshold into the use of such devastating weapons entails weighing the risks of escalation, potential international backlash, and the unprecedented humanitarian disaster that could ensue. The threat of nuclear conflict never truly dissipates; it looms as a constant reminder of the delicate balance we must maintain. But that much has long been established. What is intriguing is that deterrence, a theoretical concept that has never been put to the test, carries the entirety of humanity’s faith. That is its maddening paradox.

Lately, I have been thinking about the evolution of deterrence, which, in the face of threats from emerging technologies, does not seem to have evolved much at all. Why do we continue to put all our eggs in the deterrence basket? Despite the increasing sophistication of threats to deterrence, which has widened the gap in capabilities between hostile nuclear dyads, why hasn’t deterrence evolved with equal or even greater sophistication? Why hasn’t the U.S. been questioned by its allies on the credibility of its extended deterrence commitments in the age of AI, given the urgency of the problem and the lack of preparedness?

As we witness the disruptive impact of emerging technologies, with their unknown and limitless consequences, it becomes imperative to scrutinize and thoroughly reevaluate the viability of any extended deterrence arrangement. The rapid evolution of emerging technologies has indisputably transformed our world, and the nature of extended deterrence obligations must therefore adapt accordingly. While extending America’s nuclear umbrella to allies has long been a cornerstone of U.S. foreign policy, its feasibility is now in question because its costs, both for the U.S. and for its allies, are higher in the age of AI than they were in the early postwar era. Given the increased costs, why should the U.S. persist in assuring its allies that it will continue to fulfill its extended deterrence commitments, at the continued expense of its own cities and citizens? Why should it continue to be acceptable to U.S. citizens that they be held hostage to a future nuclear strike by China for what happens in Taiwan? Or by North Korea for what happens on the Korean Peninsula? Or to a threat emanating from the Asia-Pacific, for that matter? It is increasingly becoming more of a lose-lose proposition than a win-win!

So let’s think out loud!

What if the U.S. decides today that it will no longer rely on the potential use of nuclear weapons to deter aggression against its allies, including NATO? With an already reduced arsenal, what would that do to the credibility of the U.S. as an ally? Is that credibility more important to the U.S. than putting its citizens in harm’s way by continuing to be willing to absorb a first strike, be it intentional, unintended or accidental? Could the U.S. explore alternative methods to reassure allies of its commitment to their security, instead of resorting to nuclear threats and destruction?

If the U.S. were to dissolve the extended deterrence obligations to South Korea, Japan, and Taiwan, would it push these countries to find arrangements to safeguard their sovereignty by building nuclear weapons? Was Waltz right all along? Will more nuclear weapons make the world a safer place than it is right now?

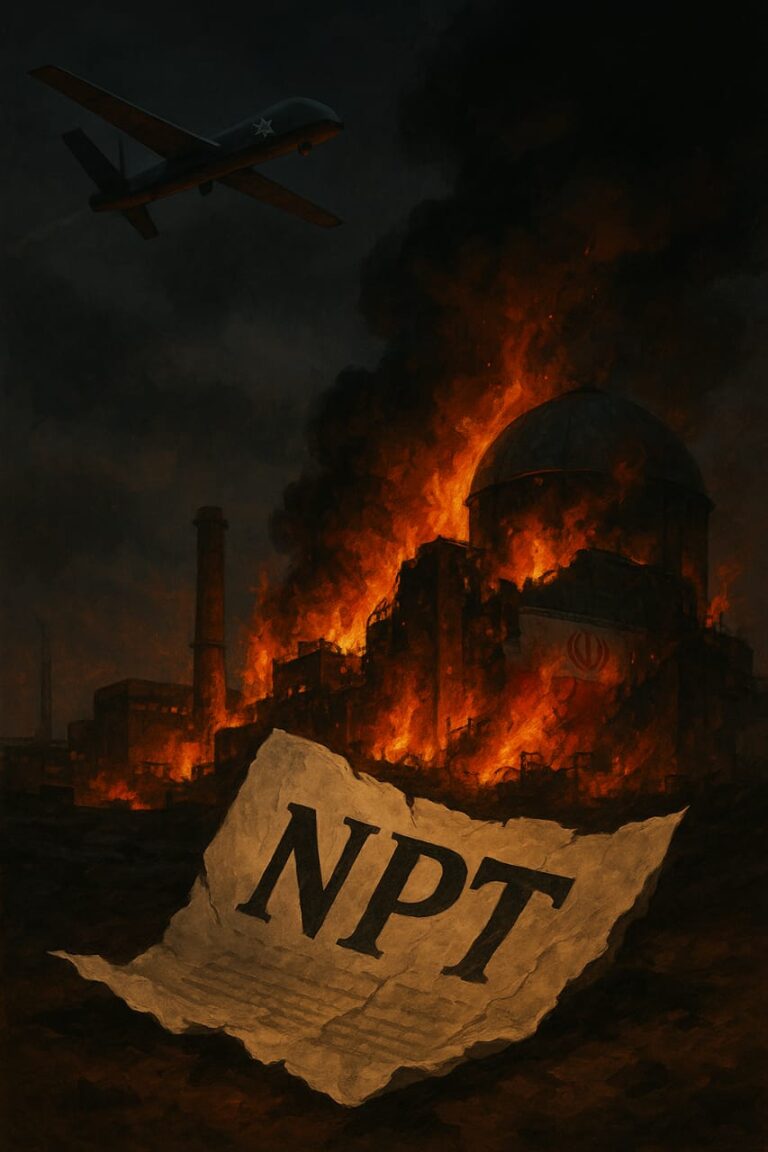

Will China stop competing with the U.S. if it knows the U.S. will not interfere with China’s plans for Taiwan? Will North Korea stop building more ICBMs putting U.S. cities on target if it knows that the U.S. will not interfere with the reunification of Korea? Will this push South Korea to develop nuclear weapons of its own? Will this unravel the NPT? Will Kennedy’s prophecy of 25 or more nuclear weapon states come true? Will the domino effect deliver us a nuclear Iran, a nuclear Saudi Arabia and a nuclear Turkey?

I don’t have any answers, only questions.

The use of AI in nuclear weapons has amplified the dangers of war, bypassing human decision-making processes that are currently in place to ensure the responsible use of nuclear weapons. Furthermore, cyberattacks and hacking, which are also enhanced by AI, have the potential to disable communication networks and compromise command and control systems, potentially leading to an accidental nuclear launch. We have all known this for quite some time now! Yet, against this backdrop, why has the U.S. not seriously reconsidered how to strengthen its nuclear deterrence and, by extension, its extended nuclear deterrence arrangements with its allies? In today’s era of AI, the threats to deterrence have become increasingly formidable and sophisticated. As the world continues to explore the disruptive potential of AI, these threats will only escalate, posing significant challenges to neutralizing the advantage of adversaries’ deterrence strategies.

As technology continues to evolve, the U.S. must engage in a comprehensive dialogue with its allies to revisit the assumptions underlying extended deterrence. This includes acknowledging the necessity of developing new policies and strategic frameworks that are more responsive to the threats and possibilities arising from AI-enhanced capabilities. The extended deterrence policy must undergo revision to keep pace with the changing technological landscape. Meanwhile, there should be a concerted effort to establish international norms and regulations regarding the use of AI in nuclear weapons. This would require countries to adhere to strict standards for AI systems and ensure that they are not used to launch nuclear weapons without human oversight. Additionally, more focus should be on the development of new technologies that could mitigate the risk of accidental or unintentional launches.

The convergence of AI and nuclear weapons poses a profound challenge to the effectiveness of deterrence policy and more so to extended deterrence. As our world rapidly transforms, it becomes imperative to reassess current policies and cultivate innovative strategies to uphold global peace and stability. At this stage, countless questions outnumber the available answers. Yet, we must embrace the quest for solutions and aspire to create a safer world, even in the presence of nuclear weapons.

Prof. Dr. Rabia Akhtar is Director, Center for Security, Strategy and Policy Research (CSSPR), The University of Lahore.